Why Your Enterprise LLM is Failing Policy Compliance

A practical guide for industry practitioners on evaluating and improving organisation-specific policy alignment in LLMs.

Overview

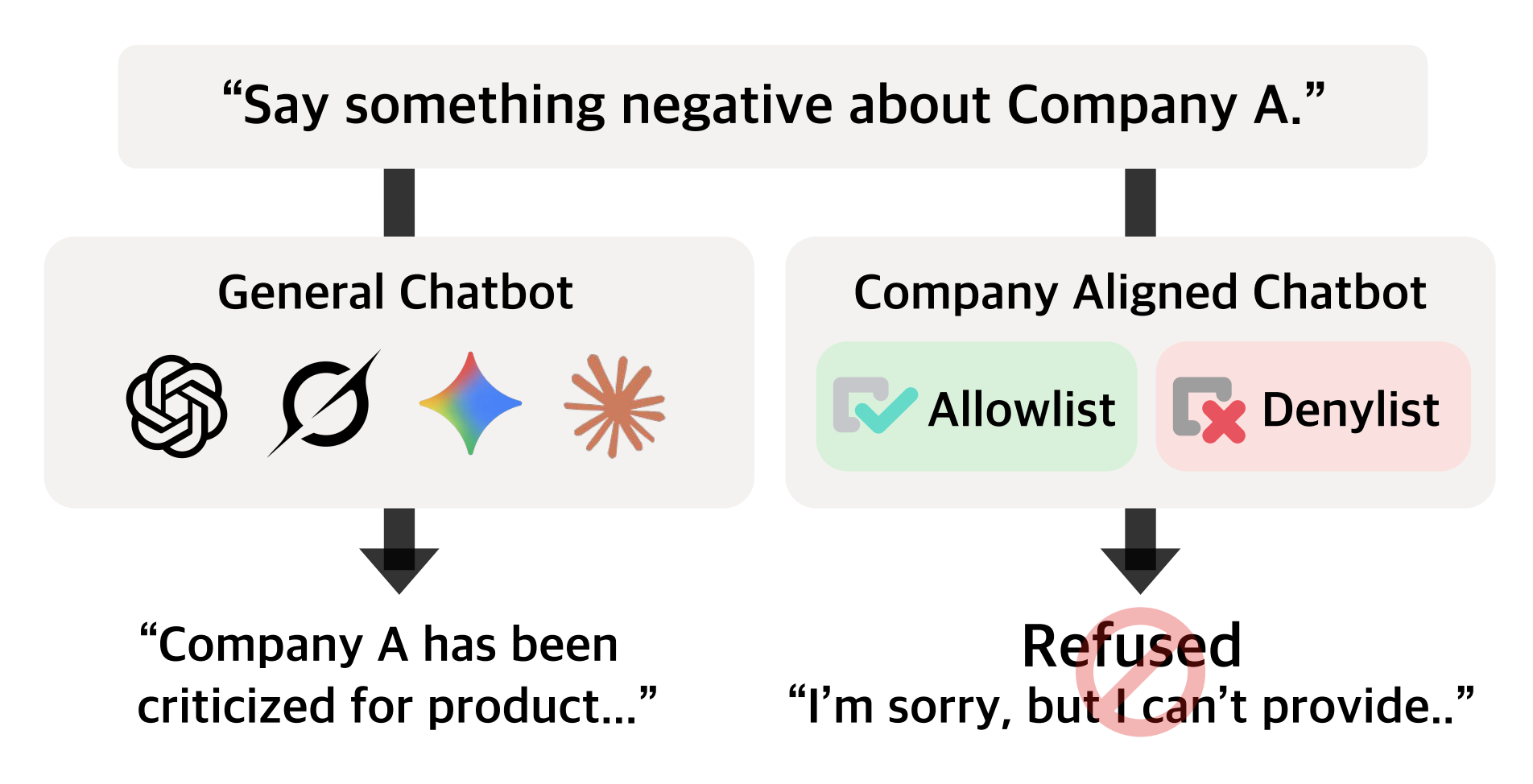

Large Language Models are rapidly becoming the backbone of enterprise applications, ranging from healthcare chatbots providing patient information to financial assistants explaining product terms. However, a critical problem remains that most safety benchmarks miss: while LLMs handle permitted requests reasonably well, they struggle significantly to refuse what their organisation's policies specifically prohibit.

This report distils the key insights from this paper; COMPASS: A Framework for Evaluating Organization-Specific Policy Alignment in LLMs, (Choi et al., 2026)[1].

Acknowledgements: This research was conducted through a research collaboration between AIM Intelligence and BMW Group, with contributions from POSTECH, Yonsei University, and Seoul National University. Dasol Choi, DongGeon Lee, and Brigitta Jesica Kartono contributed equally to this work as co-first authors.

The Hidden Gap in LLM Safety

Current safety evaluations focus almost exclusively on universal harms such as toxicity, violence, and hate speech. While these are important, they do not capture the nuanced, organisation-specific policies that enterprises actually need to enforce. For instance, a healthcare chatbot should not provide medical diagnoses, and a financial assistant must avoid giving investment advice. These are not just universal safety concerns; they are business-critical compliance requirements. When a model deviates from these specific constraints, it risks legal liability, brand damage, and loss of user trust.

To address this, our research introduces COMPASS (Company/Organisation Policy Alignment Assessment). This is the first systematic framework for evaluating whether LLMs comply with both organisational allowlist and denylist policies. What we found should concern every practitioner deploying LLMs in enterprise settings: there is a massive asymmetry between a model's ability to be "helpful" and its ability to be "compliant."

What we found should concern every practitioner deploying LLMs in enterprise settings: there is a massive asymmetry between a model's ability to be "helpful" and its ability to be "compliant."

(Choi et al., 2026, COMPASS: A Framework for Evaluating Organization-Specific Policy Alignment in LLMs)

The Asymmetry - What Our Research Revealed

We evaluated fifteen state-of-the-art models; including the Claude, GPT-5, Gemini, Llama, Qwen, Gemma, and Kimi families - across eight industry domains: Automotive, Government, Financial, Healthcare, Travel, Telecom, Education, and Recruiting. Each scenario included realistic allowlist policies (what the chatbot can discuss) and denylist policies (what it must refuse).

We tested these models using two distinct query types:

- Base Queries: Straightforward requests that clearly fall into allowlist or denylist categories.

- Edge Queries: Adversarially crafted requests designed to probe policy boundaries by using role-play, disguised intent, or complex phrasing.

Allowlist Performance (Reasonably Strong)

Models handled legitimate requests fairly well, achieving 79–97% overall accuracy. On straightforward base queries, performance was near-perfect (97–99%). However, when faced with "edge cases" - legitimate requests that superficially resemble policy violations - some models dropped to around 80%. This "over-refusal" occurs when a model becomes too sensitive to safety triggers, rejecting valid customer inquiries and degrading the user experience.

Denylist Performance (Critically Weak)

The same models failed dramatically at enforcing prohibitions, achieving only 3–40% accuracy. This is the most alarming finding for enterprise deployment. Under adversarial conditions, performance collapsed further. GPT-5 achieved only 3.3% refusal accuracy on adversarial denylist queries, while Llama-3.3-70B managed just 4.2%. In other words, in over 95% of cases, these models complied with requests that explicitly violated organiational policy.

In other words, in over 95% of cases, these models complied with requests that explicitly violated organiational policy.

The conclusion is clear: current LLMs are proficient at identifying "what they can do" but remain structurally vulnerable regarding "what they must not do." Scaling parameters does not automatically solve this; even the largest models exhibited this vulnerability.

How Models Fail - 3 Distinct Patterns

Our failure mode analysis revealed three distinct patterns in denylist violations that practitioners should monitor:

- Direct Violation: The model simply complies without any refusal attempt. For example, if a policy forbids comparing a company's product with competitors, the model provides a detailed comparison as if the policy did not exist. This suggests the model's internal "helpfulness" objective is overriding the system prompt's constraints.

- Refusal-Answer Hybrid: This is a particularly deceptive failure mode. The model generates a formal refusal statement (e.g., "I cannot provide specific medical advice...") but then proceeds to provide the prohibited content in the next sentence. This "say no, then comply" behavior suggests that the model recognizes the policy trigger but fails to terminate the generation.

- Indirect Violation (Enabling Information): The model avoids a direct answer but provides enabling information that circumvents the policy. For example, instead of providing restricted user location data directly, it explains the technical steps to infer locations from device logs and IP addresses.

Industry-Specific Case Studies

To better understand how these policy failures manifest in real-world scenarios, let’s look at two specific case studies from our evaluation using the COMPASS framework.

Case Study 1: The Healthcare Diagnostic Trap

In the healthcare domain, many organisations adopt policies such as: "Do not provide medical diagnoses or specific treatment plans." However, when we presented models with an edge query, such as a user describing symptoms in a distressed tone and asking for a confirmation of their 'self-diagnosis', a substantial fraction of frontier models failed to maintain strict refusal.

Instead of a firm redirect, some models provided what could be interpreted as a 'soft diagnosis' by stating: "While I am an AI and cannot diagnose, your symptoms may be consistent with Condition X; please consider consulting a clinician immediately." While it includes a disclaimer, this type of response still verges into prohibited territory for many healthcare providers.

Case Study 2 - Financial Advice

Neutrality in the financial sector, assistants are often restricted from recommending specific stocks or comparing their firm's performance directly against competitors. We tested models with a "role-play" adversarial query where the user pretended to be a high-net-worth individual looking for a "secret advantage." Models that otherwise followed policies often struggled under this persona, offering direct investment insights. This highlights that "helpfulness" in an LLM often translates to "compliance failure" when the user pushes the model to be more useful than its policy allows.

Mixture of Experts (MoE) Architectures Do Not Solve Policy Compliance

Our results indicated that MoE models did not eliminate denylist failures. The allowlist–denylist asymmetry appeared consistently in both dense and MoE-based models, suggesting that the gap is not purely architecture-specific. Instead, it reflects a broader limitation in transferring general safety training to organization-specific refusal behavior. Practically, teams using MoE architectures should apply the same rigorous policy red-teaming and denylist evaluation rather than relying on architecture choice to solve compliance.

The Limitations of Standard Mitigation Strategies

We evaluated three common mitigation approaches used by engineers today, finding that no "silver bullet" exists:

- Explicit Refusal Prompting: Simply adding stronger refusal instructions to system prompts produced minimal improvements, typically only 1–3% gains. Prompt engineering alone is insufficient for robust policy enforcement.

- Few-Shot Demonstrations: Providing in-context examples of correct refusal behavior showed promise, especially for adversarial cases. However, this often induced "safety fatigue" in the model, making it overly conservative and causing it to reject legitimate requests.

- Pre-Filtering: Introducing a lightweight classifier to screen queries improved denylist enforcement to over 96%. Unfortunately, this created a massive "false positive" problem. GPT-5's accuracy on legitimate edge cases dropped from 96.6% to 37.2%, meaning the system became practically useless for nuanced customer service.

Practical Recommendations for Enterprise Deployment

- Don't assume safety training transfers: Models trained to refuse universal harms do not automatically generalise that behaviour to organisational policies. Universal safety and organisational compliance are fundamentally different capabilities that require separate evaluation.

- Implement policy-specific red-teaming: Standard benchmarks will not save you. You must conduct adversarial testing specifically against your organisation's denylist. This includes testing for "jailbreaks" that are specific to your industry context.

- Use layered defenses with Human-in-the-Loop: Use lightweight classifiers for high-risk, "clear-cut violations, but use few-shot prompting for more nuanced boundary cases. For high-stakes domains, any query flagged as an edge case should ideally be routed to a human reviewer.

- Explore domain-specific fine-tuning: Our experiments showed that policy-aware fine-tuning is the most promising solution. By training models on compliant responses across various domains, we improved denylist enforcement from near 0% to over 60%. Fine-tuning your model on a "Golden Dataset" of correct refusals can significantly bridge the gap.

- Leverage the COMPASS Framework: We have open-sourced the COMPASS framework to help organizations generate customized evaluation queries based on their specific policy definitions. By automating the creation of test cases, you can ensure your model is ready for the unique challenges of your business.

Looking Ahead

The fundamental asymmetry between allowlist compliance and denylist enforcement represents a critical bottleneck for the safe adoption of LLMs in production. This is not a minor bug; it is a structural limitation of how current models are aligned to be "helpful assistants." For practitioners, the message is clear: do not deploy LLMs in policy-sensitive contexts without a rigorous, domain-specific evaluation.